It’s hard to deny that AI has taken over. Many consumers, myself included, are rightfully becoming more and more skeptical when scrutinizing art and writing. Did an actual person with real talent and skills create it? Or did an AI prompt spit something out in a matter of seconds for a human to slap their name on and claim as their own?

This year, for the first time, I’ve had to find new ways to communicate my transparency with fans, followers, and customers in regard to my stance on AI use in my books and art. My thoughts about AI are drastically different now than they were in my commentary when I first weighed in on the topic back in July 2024. The evolution was fast, and I underestimated the impact.

**Let me note that when I refer to “AI,” I’m talking about large language models (LLMs). These are not technically considered true artificial intelligence capable of independent thought, but most people are referring to them as AI.**

Frankly, it’s exhausting. And it’s getting harder to identify AI. Real artists have had their reputations dragged through the mud after being falsely accused while self-proclaimed “AI artists” manage to cash in on society’s insatiable demand for more content and instant gratification.

When I published my first novel in 2018, I had no idea how much the publishing industry would change in eight years. Even when I released the third novel in my series in early 2023, I didn’t really see the AI movement as a serious threat encroaching on the book market.

But now, with the publication of my fourth novel, it’s an entirely different world. AI-generated books are flooding the market, and authors are having to adapt as best we can. However, with a frustrating lack of transparency and regulation around AI, our options are limited.

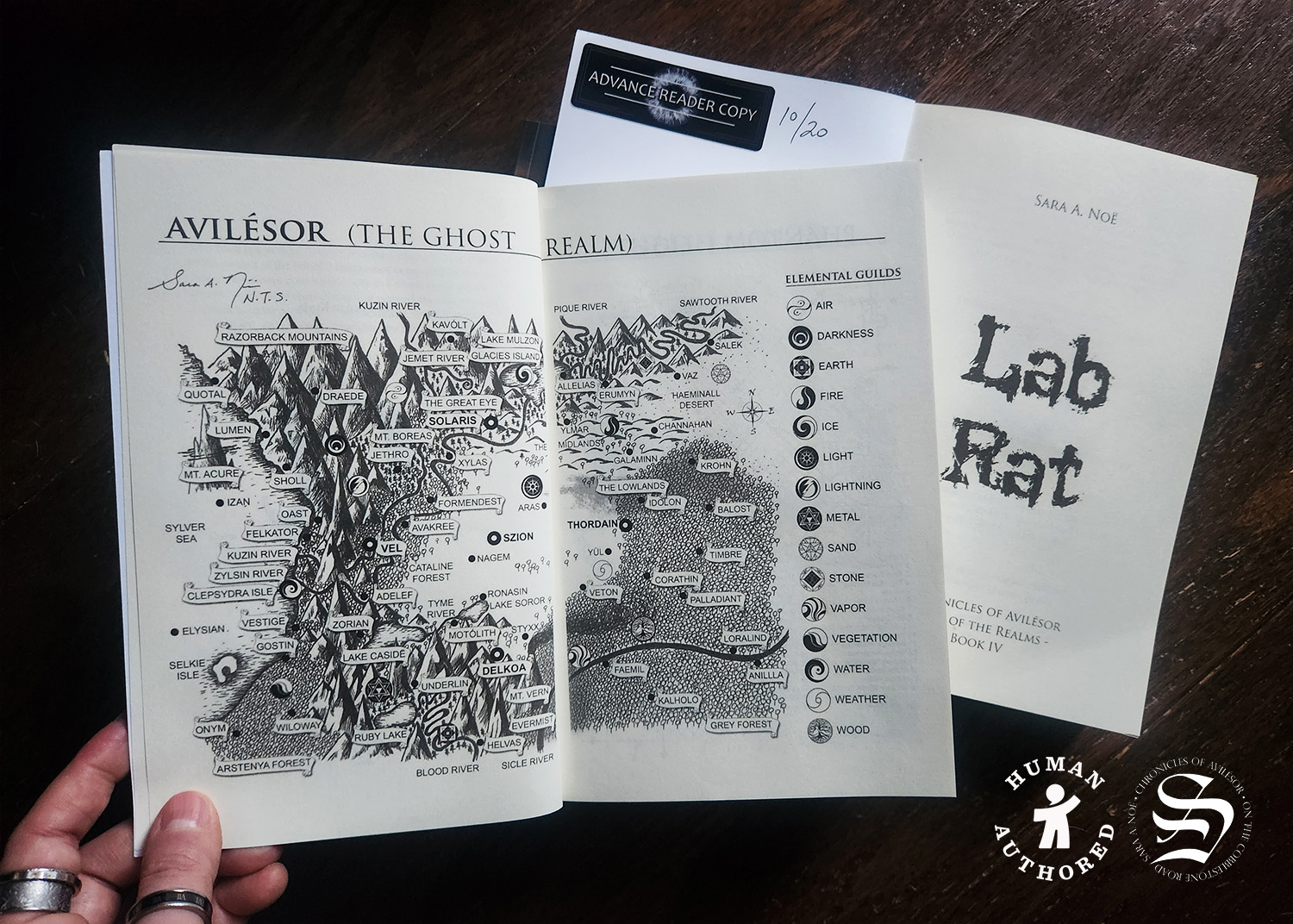

This is why, when a local bookstore owner made me aware of the human-authored program from the Authors Guild, I decided to certify my latest novel. In this article, I’ll discuss what the certification means, address some of the (extremely valid) concerns about the initiative in the author community, and share my perspective about the demand for books written by actual human beings.

- What is the Human-Authored Program (And Why is it Relevant)?

- Concerns in the Author Community

- 1. The process is backwards; books should have clear disclaimers if they’re AI, not the other way around.

- 2. The honor system is hard to enforce and easy to abuse.

- 3. Making authors pay for certification is an unjust extra financial burden.

- 4. If some AI is allowed, where is the line drawn?

- 5. What if the voluntary database makes people assume that authors who didn’t choose to certify must have used AI?

- Do Readers and Retailers Care About Human-Authored Books?

This article may contain affiliate links. To learn more about how these links are used on this website, read the affiliate disclosure.

What is the Human-Authored Program (And Why is it Relevant)?

The Authors Guild is the oldest and largest professional organization for writers in the United States. It was founded in 1912 with a mission of advocating for the rights of writers and authors while providing members with resources to help them navigate the publishing industry. Specifically, its primary focus is on free speech, copyright protection, and fair contracts.

The Guild launched their new “human-authored” certification in January 2025 with the goal of “preserving authenticity in literature” in an attempt to push back against AI-generated books. Their official tagline is:

“Distinguish human creativity in an increasingly AI world.”

Members could certify their books for inclusion in a public database searchable by registration number, book title, author name, and ISBN. Certification also allowed authors to use the official certification mark on their book cover, copyright page, website, marketing and promotional materials, etc. However, participation in the beta program was reserved for members only.

Recently, the Authors Guild partnered with the UK’s Society of Authors and expanded the human-authored program to include non-members in March 2026.

Authors who choose to participate must verify their identity when they certify their book. Upon completion, they receive a registration number that can be included with the book’s copyright information. Anyone who wants to search for human authenticity is able to access the database. Here is a screenshot of what the database displays when you search for the title that I registered:

As you can see, it’s a very simple search result. It doesn’t reveal much about the book or the author, only that the title has been registered with the Authors Guild.

I believe this certification is just one small step to confront a much larger problem. It’s not a perfect solution, but in my opinion, it’s better than doing nothing against the AI monster. From in-person conversations to online chatter, I’m seeing more and more readers actively pushing back against AI and seeking out books written by actual human beings.

AI-generated books are not being clearly labeled. There’s not even a vague disclaimer at the bottom of a product page. I’ll admit that it feels downright ridiculous to label my books as “human-authored” because that should be the default, not the exception… but until AI books become more transparent, it’s up to authors to be upfront about how our books are created. Not necessarily for our sake, but for the sake of readers who are hunting for AI information and failing to find it when shopping for new books.

Authors are not responsible for that failure. The industry is. However, we now have a tool that we can use to help alleviate the problem. At least, to some extent.

Concerns in the Author Community

The human-authored initiative has received mixed feedback among authors. While some approve of the Authors Guild taking a stand against AI-generated books and developing a way to communicate an anti-AI disclaimer to readers and retailers, others are skeptical and downright resentful for several reasons.

Some of the biggest concerns I’ve seen vocalized in online forums:

- The onus should be placed on AI books, not books written by real people, to clearly communicate how the book was created.

- The human-authored certification is based on the honor system, making it susceptible to abuse.

- Authors have to pay to be certified, either by committing to an annual membership fee with the Authors Guild or paying per registered title.

- There’s a gray area about how much AI usage is allowed to still qualify as human-authored.

- People might start relying too heavily on the database and make the incorrect assumption that exclusion equals AI-generated.

These are all completely valid concerns. Although I can’t speak for the entire indie author community, here are my personal thoughts on each of these issues:

1. The process is backwards; books should have clear disclaimers if they’re AI, not the other way around.

I 100% agree. This shouldn’t even be a debate.

I’ve spoken to readers and bookstore owners who are actively trying to find human-authored books while avoiding AI-generated ones. And that is easier said than done.

Governments around the world, not just here in the US, have dragged their feet when it comes to regulating AI and clearly identifying its usage to consumers. When I published my latest novel, I had to indicate whether or not I had used generative AI as part of the creation process. If I checked yes, then I had to provide additional information about how that AI was used. (But since I checked no, that step was irrelevant.) I had to do this on all three of the distribution platforms I use: IngramSpark, KDP (Amazon’s company), and Draft2Digital.

For example, here is what that looks like on KDP’s platform when uploading my ebook:

However, when you go to the book’s listing on Amazon and review the product details, there’s absolutely no mention anywhere about whether or not AI was used:

Why does Amazon ask authors/publishers to disclose AI use if that information isn’t then communicated to customers? As far as I can tell, this seems to be the case everywhere the book is listed for sale, even with my wholesale distributor.

I know people who have purchased a book online, thinking it was written by a real person, only to realize that it was an AI book and all of the “author” information led to fake online accounts and websites. There was no disclaimer. No indication in the product listing.

That’s not right.

However… that’s the way it is in the publishing industry right now. Authors can either accept that reality and do their best to take extra steps to let readers know that they’re human, or they can stew about the situation and do nothing out of spite.

The burden should NOT be on us. But it is.

The question is… what are we going to do about it? Sit around and wait, hoping and praying that something changes as more and more AI-generated books flood the market without any labels or disclaimers?

I decided to be as transparent as possible, which is the point of the human-authored project. Will potential readers, bookstore owners, librarians, retailers, etc. look at my copyright page and see the human-authored logo?

Maybe. Maybe not. But it’s there, and if anyone is seeking confirmation about whether my book is AI or not, they can find it fairly easily with all of the relevant copyright information, including the ISBNs, LCCN, and publication year.

Will anyone ever search for the book or my name in the database? I don’t know. But again, the information is out there. What is there to lose?

2. The honor system is hard to enforce and easy to abuse.

Unfortunately, AI detection tools aren’t reliable enough, and they’re having to evolve constantly as LLMs do. The LA Times published an article about an experiment last year testing various AI detection tools on five pieces of writing. One was handwritten from scratch. Two were generated by ChatGPT. One was AI-generated but then edited by a person, and the last one was human-authored but then cleaned up using a grammar tool.

The results? All over the place.

One tool flagged the human-written piece as AI while that same tool gave the ChatGPT article a “mostly human” pass. Three detection tools thought the human-authored article that had been refined with a grammar tool was likely AI-generated. The blog post that had been written by AI and then lightly edited by a person received a green light.

When comparing the exact same samples, one tool provided a 92% “human” score while another said it was “99.7% likely AI.”

The experiment led the author to five conclusions:

- Clean writing tends to fool AI detectors.

- Most of the tools were successfully fooled when an AI-written piece was edited by a person.

- Using a grammar checker on human writing had a negative impact on the AI detection score.

- Free paraphrasing tools also caused the detectors to flag the writing.

- The detection tools were inconsistent and offered contradictory results.

So… what is the Authors Guild supposed to do? How can they verify authenticity when the AI-detection tools are all over the map?

Jane Friedman, who was named Publishing Commentator of the Year in 2023 by Digital Book World, reached out to the Authors Guild to express her concerns over this matter. Mary Rasenberger from the Authors Guild responded:

We have protected against scammers by (1) requiring non-members to be verified through a well-known, third party identity verification system, (2) limiting the number of books that any one person can certify in a year without reaching out to us for an exception, (3) charging a small fee which will discourage scammers, and (4) providing a unique registration number for each title, with a public searchable database of registered titles, so that any use of a certification can be checked.

We also prevent fraudulent certification by requiring all users to sign a license agreement for each title registered that makes the user represent and warrant that the title was “Human Authored”—meaning the text of the work itself (excluding the table of contents or index) was written by one or more humans (excepting de minimis use for spell check and editing). As we clearly state in the contract, if a licensee-author registers a book that the author knows contains AI generated text, they will be liable for breach of contract, trademark infringement, and likely consumer fraud under various laws. We believe that should disincentivize authors who use AI to generate text for them from registering their books. The Human Authored certification is not a requirement after all—it is only for those who wish to distinguish their work. In addition, we also continue to look at AI detection services and may introduce one in the future. We will likely have to raise fees though if we do.

It’s not a perfect solution, and the Authors Guild is aware of that. When and if better tools become available, the Guild is open to incorporating them into the certification process. In the meantime, the Guild has evaluated available options and taken steps to make sure a human-authored certification has meaning. It’s not just a free logo that anyone can download.

3. Making authors pay for certification is an unjust extra financial burden.

Let me just say… I get it. As an indie author, I operate on an extremely tight budget and take on as many tasks as I can to save money rather than outsourcing to freelancers. (That, and I’ll admit that I’m a bit of a control freak and a perfectionist, so… I have some trust issues with my work.)

Most of my publishing expenses go to the line edit, ebook format, and ISBNs. I do my own cover art, maps, and interior print layouts. Although there are benefits to joining the Authors Guild, such as access to legal resources; web services such as website-building software and site hosting; liability insurance; business discounts; networking with global, national, and regional chapters; education opportunities; and general author advocacy, I’m not keen on spending an extra $149 each year when that money can go toward vendor fees, publishing costs, and other essential business expenses.

But for non-members, the cost to register a title in the human-authored database is only $10. I think that’s an extremely reasonable fee that isn’t going to break the bank and force an indie author to choose between investing in registration or a different type of business expense.

It’s also important to note that the Authors Guild isn’t simply pocketing that $10 “donation.” As you saw in the quote from Rasenberger’s response above, part of the registration process involves going through a third-party identity verification system (Veriff). Your small contribution helps the Authors Guild pay for that system since you aren’t paying membership dues to use this service they provide.

According to the Authors Guild’s FAQ page:

To run a reliable, trustworthy program, we must ensure that the persons certifying that they are the authors of the works registered (and that the work is Human Authored) are in fact who they say they are. As registration is done online, we need to confirm user’s identities online. Veriff is a well-established, trusted individual identification system. We chose the most secure and reliable identity verifier we could. The company complies with all important international standards.

Should authors have to pay to register? On principle… I do understand where this concern comes from. But I respect that the Authors Guild is not charging in order to make a profit. They’re asking for a small amount to help cover operating costs. That’s not unreasonable.

4. If some AI is allowed, where is the line drawn?

Here is where the process gets fuzzy on a couple of levels. First, with AI tools being integrated into just about every piece of software, it’s getting harder for authors to even tell if they’re using AI, and to what extent. Obviously, if you’re generating text and pasting it into your manuscript, that’s one thing. But what if you use tools to check grammar and rephrase sentences? What if you work with an editor who is recommending changes based on generative AI and you aren’t aware when you implement those advised rewrites?

Second, the Authors Guild does allow some AI usage. You can let AI outline your book, as long as you do the writing yourself. Do you agree with that? (Personally… I don’t. But then again, I also have issues with ghost writing and copying formulaic mass-market plots, while other authors don’t, so perhaps I’m just being picky.)

Who decides where to draw the line? Is a book considered to be human-authored even if the plot, characters, and details were conceived by AI and then written by a person? What if you use AI to generate the entire book and then rewrite it so you aren’t actually using the AI-generated text at face value?

According to the Authors Guild usage guidelines:

“The certification mark may only be used in connection with literary works for which the text itself was fully authored by one or more human beings and not generated by AI, except for a de minimis amount (such as through the use of AI-powered spelling and grammar check applications). Use of generative AI to create a table of contents, indices, or other auxiliary parts of a book, or for researching, brainstorming, outlining, or any purposes other than generating text does not disqualify a work from being Human Authored.”

It’s a messy gray area, so I absolutely understand authors’ frustration. There are no clear-cut guidelines about how much AI can be used in the conceptual phase of writing a book.

Nobody ever claimed that the human-authored project was a perfect solution. With how rapidly AI is evolving and integrating into everyday tools, often without the users’ knowledge, that gray area is just getting muddier and muddier. I do commend the Guild’s efforts to help authors differentiate their books from AI-generated slop, even if the initiative does still have some faults.

5. What if the voluntary database makes people assume that authors who didn’t choose to certify must have used AI?

Although this is also a valid concern, trying to predict public assumptions is dangerous thinking. Will some people who don’t fully understand the intention of the human-authored database jump to the wrong conclusion if they search for a book or author and can’t find them? Possibly.

But, as someone who has worked with the general public in a variety of customer service jobs and regularly interacts with people who shop in my booth at festivals, conventions, markets, et cetera, let me just say that there is no possible way to fix this problem 100% of the time. If you clearly label every product with a price tag, people will still ask you how much something costs. If you put out informational cards and signs, people won’t read them.

Heck, one of the most surprising challenges I faced when I started selling my books in person was communicating that I was the author of the book series I was selling. I thought that was a no-brainer, but the number of times people acted surprised and initially affronted when I offered to sign their books after they committed to making the purchase shocked me. Perhaps it was because I had a tendency to remove myself from the conversation and talk about “this book series” instead of “my books” so I didn’t seem quite as biased and self-centered… but I still have a hard time wrapping my head around the fact that people apparently thought I just REALLY loved this particular series that nobody had ever heard of. Like… if I were going to theme my entire booth around someone else’s series, I would have picked one that had a strong, well-established fan base, like Lord of the Rings. Not some obscure indie series that required way more time and effort to pitch to people. Seriously, what a terrible marketing idea!!

I will personally attest that trying to guess what people are going to assume and how they’ll react will drive you crazy. You can pay attention to conversations and patterns and do your best to address those issues as they arise, but that’s about it. As the saying goes, “I can explain it to you, but I can’t understand it for you.”

The human-authored project is meant to help increase transparency for authors who want to clearly convey their position to potential readers. It doesn’t mean that exclusion automatically equals AI. We’re already entering a society where many readers presume that AI was used in some capacity, even if a book is not labeled one way or another. I suspect that assumption, whether it’s conscious or not, will become even more prevalent as AI continues to encroach on the creative process.

Do Readers and Retailers Care About Human-Authored Books?

I feel confident in saying yes. The majority does care.

I’ve talked to two local bookstore owners who have taken strong stances against AI-generated books and expressed frustration about the lack of transparency from wholesale distributors. They are having to spend more time researching books and looking for red flags when they order, and even that extra due diligence isn’t always enough.

The same goes for readers. Since retailers like Amazon aren’t clearly flagging AI books, the only way for a reader to semi-accurately gauge whether a book was written by a real person or a large language model is to read the reviews… which is only beneficial if other readers already made the mistake of purchasing the AI book and then took the time to warn others by writing a review.

It’s not a hard concept. If a human couldn’t be bothered to write the book, then why should a human be bothered to read it?

The tech industry pushed the idea of AI onto the public with so much force (and an ungodly amount of money, propaganda, and unrealistic expectations)… but the public has been pushing back. They haven’t swallowed the pill despite every effort to shove it down our throats. Some have, sure. But not the general public as a collective.

Renowned economist Ruchir Sharma uses what he calls “the four O’s” to diagnose “bubbles” that are at risk of popping. And guess what? The AI bubble shows warning signs on all four metrics (overinvestment, overvaluation, over-ownership, and over-leverage), which suggests it could be about to burst. According to an MIT report, 95% of organizations that invested in AI are experiencing zero return.

Even outside signs are visible to those of us who aren’t directly plugged in to the tech industry. Disney canceled a $1 billion licensing partnership with OpenAI when the Sora AI video-generating app shut down less than six months after its launch. AMC refused to show the winner of an AI short film contest, titled Thanksgiving Day, in their theaters nationwide after receiving backlash when the contest prize was announced. More than a dozen lawsuits have been brought against AI companies for copyright infringement on behalf of authors, artists, and publishers. (The Authors Guild is involved in one against OpenAI and Microsoft.)

As an artist and author, I have admittedly been discouraged by the AI boom devaluing art at every turn and prioritizing quantity over quality. For real artists who are passionate about what they do, it’s not about money or fame (although it would be nice for artists to be able to make a livable wage doing what they love instead of being forced to treat it as a side hustle while working a “real” job).

There’s something inherently human about creating art. We are inspired, and we inspire each other. LLMs can’t capture that. They can’t even authentically recreate it.

I think readers understand that. At least, the readers who appreciate genuine storytelling. For those who consume variations of the same stories told over and over and over again… I don’t know. Maybe AI actually has a niche to fill there when it comes to mass-producing shallow, formulaic books built on patterns and nostalgia. But I do genuinely believe that most readers who enjoy books are able to connect with stories on a deeper level that can only come from an author pouring their soul into their work.

I do think that AI is going to drive a deeper wedge between rapid-release authors whose business model relies on quantity vs. more traditional authors who spend a lot of time focusing on worldbuilding, character development, and other elements that require more thought and planning. This is purely my own opinion, but I would argue that one fan base is more likely to be forgiving of AI books as long as those readers can rapidly consume familiar content, while the other will be more faithful to author creativity, even if that means they have to wait a few years between book releases.

What do you think? Should authors label their work to help people feel confident that the books they’re buying and reading were actually created by passionate human beings? Is the human-authored certification by the Authors Guild a step in the right direction, or the wrong direction?

I'm an award-winning fantasy author, artist, and photographer from La Porte, Indiana. My poetry, short fiction, and memoir works have been featured in various anthologies and journals since 2005, and several of my poems are available in the Indiana Poetry Archives. The first three novels in my Chronicles of Avilésor: War of the Realms series have received awards from Literary Titan.

After some time working as a freelance writer, I was shocked by how many website articles are actually written by paid "ghost writers" but published under the byline of a different author. It was a jolt seeing my articles presented as if they were written by a high-profile CEO or an industry expert with decades of experience. I'll be honest; it felt slimy and dishonest. I had none of the credentials readers assumed the author of the article actually had. Ghost writing is a perfectly legal, astonishingly common practice, and now, AI has entered the playing field to further muddy the waters. It's hard to trust who (or what) actually wrote the content you'll read online these days.

That's not the case here at On The Cobblestone Road. I do not and never will pay a ghost writer, then slap my name on their work as if I'd written it. This website is 100% authentic. No outsourcing. No ghost writing. No AI-generated content. It's just me... as it should be.

If you would like to support my work, check out the Support The Creator page for more information. Thank you for finding my website! 🖤